How Do You Know if a Study Had Enough Power

Statistical Power and Why It Matters | A Simple Introduction

Statistical power, or sensitivity, is the likelihood of a significance test detecting an effect when there actually is one.

A true effect is a real, non-zero relationship between variables in a population. An effect is usually indicated by a real difference between groups or a correlation between variables.

High power in a study indicates a large chance of a test detecting a true effect. Low power means that your test only has a small chance of detecting a true effect or that the results are likely to be distorted by random and systematic error.

Power is mainly influenced by sample size, effect size, and significance level. A power analysis can be used to determine the necessary sample size for a study.

Why does power matter in statistics?

Having enough statistical power is necessary to draw accurate conclusions about a population using sample data.

In hypothesis testing, you start with a null hypothesis of no effect and an alternative hypothesis of a true effect (your actual research prediction).

The goal is to collect enough data from a sample to statistically test whether you can reasonably reject the null hypothesis in favor of the alternative hypothesis.

- Null hypothesis: Spending 10 minutes daily outdoors in a natural environment has no effect on stress in recent college graduates.

- Alternative hypothesis: Spending 10 minutes daily outdoors in a natural environment will reduce symptoms of stress in recent college graduates.

There's always a risk of making one of two decision errors when interpreting study results:

- Type I error: rejecting the null hypothesis of no effect when it is actually true.

- Type II error: not rejecting the null hypothesis of no effect when it is actually false.

- Type I error: you conclude that spending 10 minutes in nature daily reduces stress when it actually doesn't.

- Type II error: you conclude that spending 10 minutes in nature daily doesn't affect stress when it actually does.

Power is the probability of avoiding a Type II error. The higher the statistical power of a test, the lower the risk of making a Type II error.

Power is usually set at 80%. This means that if there are true effects to be found in 100 different studies with 80% power, only about 80 out of 100 statistical tests will detect them on average.
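
The "80 out of 100" reading can be checked with a small simulation. The sketch below is an illustration rather than part of the original article: it assumes two groups with normally distributed scores, a known standard deviation of 1, a true standardized effect of 0.5, and 64 participants per group (values chosen so that power is close to 80%). The helper name `simulate_power` is hypothetical.

```python
import random
from statistics import NormalDist

def simulate_power(effect_size, n_per_group, alpha=0.05, n_studies=2000, seed=1):
    """Estimate power as the share of simulated studies that reject the null."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-tailed critical value
    std_error = (2 / n_per_group) ** 0.5          # SE of a mean difference when SD = 1 (assumed known)
    detected = 0
    for _ in range(n_studies):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treated = [rng.gauss(effect_size, 1.0) for _ in range(n_per_group)]
        diff = sum(treated) / n_per_group - sum(control) / n_per_group
        if abs(diff / std_error) > z_crit:
            detected += 1
    return detected / n_studies

if __name__ == "__main__":
    share = simulate_power(effect_size=0.5, n_per_group=64)
    print(f"Share of simulated studies that detected the true effect: {share:.2f}")
```

Setting `effect_size=0.0` in the same function gives the share of false positives, which should land near the significance level instead.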

If you don't ensure sufficient power, your study may not be able to detect a true effect at all. This means that resources like time and money are wasted, and it may even be unethical to collect data from participants (especially in clinical trials).

On the flip side, too much power means your tests are highly sensitive to true effects, including very small ones. This may lead to finding statistically significant results with very little usefulness in the real world.

To balance these pros and cons of low versus high statistical power, you should use a power analysis to set an appropriate level.

What is a power analysis?

A power analysis is a calculation that helps you determine a minimum sample size for your study.

A power analysis is made up of four main components. If you know or have estimates for any three of these, you can calculate the fourth component.

- Statistical power: the likelihood that a test will detect an effect of a certain size if there is one, usually set at 80% or higher.

- Sample size: the minimum number of observations needed to detect an effect of a certain size with a given power level.

- Significance level (alpha): the maximum risk of rejecting a true null hypothesis that you are willing to take, usually set at 5%.

- Expected effect size: a standardized way of expressing the magnitude of the expected result of your study, usually based on similar studies or a pilot study.

Before starting a study, you can use a power analysis to calculate the minimum sample size for a desired power level and significance level and an expected effect size.

Traditionally, the significance level is set to 5% and the desired power level to 80%. That means you only need to figure out an expected effect size to calculate a sample size from a power analysis.

To calculate sample size or perform a power analysis, use online tools or statistical software like G*Power.
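
To show how three of the four components pin down the fourth, here is a minimal sketch of a sample size calculation. It is not the method used by any particular tool: it assumes a two-tailed comparison of two independent group means and uses the normal approximation n = 2 × ((z for alpha + z for power) / d)² per group, where d is a standardized effect size. The function name `sample_size_per_group` is hypothetical.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate n per group for a two-tailed, two-group comparison of means."""
    std_normal = NormalDist()
    z_alpha = std_normal.inv_cdf(1 - alpha / 2)  # critical value for the significance level
    z_power = std_normal.inv_cdf(power)          # quantile matching the desired power
    return ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)

if __name__ == "__main__":
    for d in (0.2, 0.5, 0.8):  # illustrative small, medium, and large standardized effects
        print(f"effect size {d}: about {sample_size_per_group(d)} participants per group")
```

Software that works with the t distribution rather than this normal approximation will typically report a slightly larger sample size, so treat these numbers as a lower bound.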

Sample size

Sample size is positively related to power. A small sample (fewer than 30 units) may only have low power while a large sample has high power.

Increasing the sample size enhances power, but only up to a point. When you have a large enough sample, every observation that's added to the sample only marginally increases power. This means that collecting more data will increase the time, costs and efforts of your study without yielding much more benefit.
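
The diminishing return from extra observations can be made concrete with a power curve. This is a sketch under assumptions not stated in the article: a two-tailed comparison of two group means, a normal approximation, and a standardized effect size of 0.5. The function name `power_two_groups` is hypothetical.

```python
from statistics import NormalDist

def power_two_groups(effect_size, n_per_group, alpha=0.05):
    """Approximate power of a two-tailed, two-group comparison of means."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size * (n_per_group / 2) ** 0.5  # expected test statistic under the true effect
    # Probability of landing beyond either critical value
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)

if __name__ == "__main__":
    previous = None
    for n in range(20, 141, 20):
        current = power_two_groups(0.5, n)
        gain = "" if previous is None else f" (gain {current - previous:+.3f})"
        print(f"n per group = {n:3d}: power = {current:.3f}{gain}")
        previous = current
```

Each additional block of 20 participants per group buys less power than the one before it, which is the flattening described above.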

Your research design is also related to power and sample size:

- In a within-subjects design, each participant is tested in all treatments of a study, so individual differences will not unevenly affect the outcomes of different treatments.

- In a between-subjects design, each participant only takes part in a single treatment, so with different participants in each treatment, there is a chance that individual differences can affect the results.

A within-subjects design is more powerful, so fewer participants are needed. More participants are needed in a between-subjects design to establish relationships between variables.
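
A rough comparison of the two designs is sketched below. It relies on assumptions that are not in the article: two conditions, a normal approximation, equal variances, and a `correlation` parameter describing how strongly a participant's scores in the two conditions are related (0.5 here is an arbitrary illustrative value). In that setting the variance of a within-person difference is 2 × (1 − correlation) in standard deviation units, which is what shrinks the required sample. All function names are hypothetical.

```python
from math import ceil
from statistics import NormalDist

def _z_sum(alpha, power):
    """Sum of the normal quantiles for a two-tailed alpha and the desired power."""
    std_normal = NormalDist()
    return std_normal.inv_cdf(1 - alpha / 2) + std_normal.inv_cdf(power)

def between_subjects_total(effect_size, alpha=0.05, power=0.80):
    """Total participants across two independent groups."""
    per_group = ceil(2 * (_z_sum(alpha, power) / effect_size) ** 2)
    return 2 * per_group

def within_subjects_total(effect_size, correlation, alpha=0.05, power=0.80):
    """Total participants when everyone completes both conditions."""
    # Assumed model: Var(difference) = 2 * (1 - correlation) in SD units
    return ceil(2 * (1 - correlation) * (_z_sum(alpha, power) / effect_size) ** 2)

if __name__ == "__main__":
    print("between-subjects total:", between_subjects_total(0.5))
    print("within-subjects total: ", within_subjects_total(0.5, correlation=0.5))
```

The higher the correlation between a participant's repeated measurements, the larger the saving; with a correlation of zero the within-subjects design still needs only half the total, because each person contributes to both conditions.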

Significance level

The significance level of a study is the Type I error probability, and it's usually set at 5%. This means your findings have to have a less than 5% chance of occurring under the null hypothesis to be considered statistically significant.

Significance level is positively related to power: increasing the significance level (e.g., from 5% to 10%) increases power. When you decrease the significance level, your significance test becomes more conservative and less sensitive to detecting true effects.

Researchers have to balance the risks of committing Type I and II errors by considering the amount of risk they're willing to take in making a false positive versus a false negative conclusion.
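
The trade-off between the two error types can be tabulated directly. The sketch below assumes, for illustration only, a two-tailed comparison of two group means with 50 participants per group, a standardized effect size of 0.5, and a normal approximation; `power_at_alpha` is a hypothetical helper.

```python
from statistics import NormalDist

def power_at_alpha(alpha, effect_size=0.5, n_per_group=50):
    """Approximate two-tailed power for a given significance level."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size * (n_per_group / 2) ** 0.5
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)

if __name__ == "__main__":
    for alpha in (0.01, 0.05, 0.10):
        power = power_at_alpha(alpha)
        # Type II error risk is the complement of power
        print(f"alpha = {alpha:.2f}: power = {power:.2f}, Type II error risk = {1 - power:.2f}")
```

Reading across the rows shows the exchange: accepting a higher Type I error risk lowers the Type II error risk, and the reverse.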

Effect size

Effect size is the magnitude of a difference between groups or a relationship between variables. It indicates the practical significance of a finding.

While high-powered studies can help you detect medium and large effects, low-powered studies may only catch large ones.

To determine an expected effect size, you perform a systematic literature review to find similar studies. You narrow down the list of relevant studies to only those that manipulate time spent in nature and use stress as a main measure.

For the five studies that meet these criteria, you take each of their reported effect sizes and compute a mean effect size. You take this mean as your expected effect size.

There's always some sampling error involved when using data from samples to make inferences about populations. This means there's always a discrepancy between the observed effect size and the true effect size. Effect sizes in a study can vary due to random factors, measurement error, or natural variation in the sample.

Low-powered studies will mostly detect true effects only when they are large in a study. That means that, in a low-powered study, any observed effect is more likely to be boosted by unrelated factors.

If low-powered studies are the norm in a particular field, such as neuroscience, the observed effect sizes will consistently exaggerate or overestimate true effects.
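
This exaggeration can be reproduced in a simulation. The sketch below is illustrative and rests on assumptions of its own: a true standardized effect of 0.3, normally distributed scores with a known standard deviation of 1, and only statistically significant studies being "noticed". The helper name `mean_significant_effect` is hypothetical.

```python
import random
from statistics import NormalDist

def mean_significant_effect(true_effect, n_per_group, alpha=0.05, n_studies=5000, seed=7):
    """Average observed effect among simulated studies that reached significance."""
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    std_error = (2 / n_per_group) ** 0.5  # SE of the mean difference when SD = 1
    significant = []
    for _ in range(n_studies):
        control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
        treated = [rng.gauss(true_effect, 1.0) for _ in range(n_per_group)]
        observed = sum(treated) / n_per_group - sum(control) / n_per_group
        if abs(observed / std_error) > z_crit:
            significant.append(observed)
    if not significant:
        return float("nan")  # no study reached significance
    return sum(significant) / len(significant)

if __name__ == "__main__":
    low = mean_significant_effect(0.3, n_per_group=20)
    high = mean_significant_effect(0.3, n_per_group=400, n_studies=500)
    print(f"true effect 0.30 | low-powered studies report {low:.2f} on average")
    print(f"true effect 0.30 | high-powered studies report {high:.2f} on average")
```

With only 20 participants per group, a study reaches significance only when chance pushes the observed difference well above the true one, so the significant results overstate the effect; with 400 per group nearly every study is significant and the average stays close to the truth.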

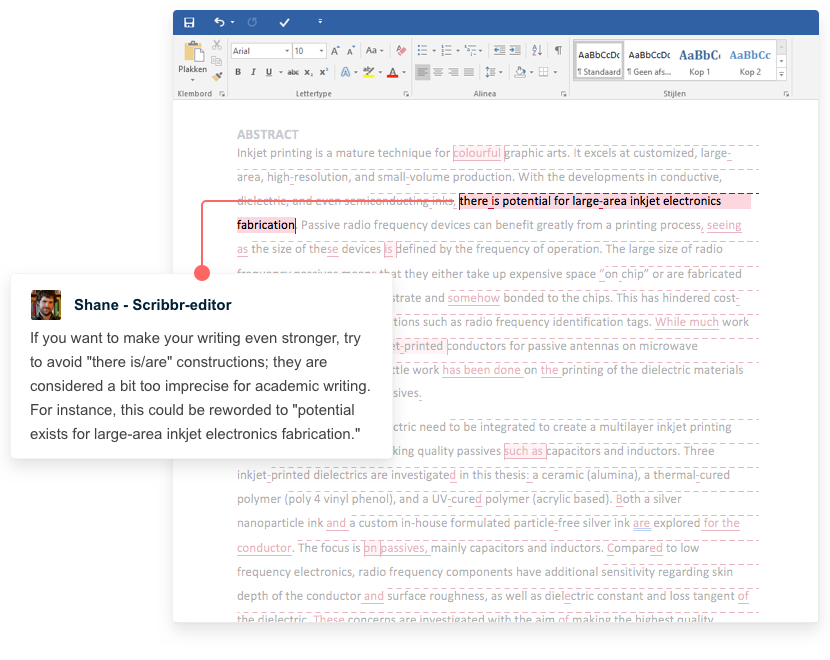

Other factors that affect power

Aside from the four major components, other factors need to be taken into account when determining power.

Variability

The variability of the population characteristics affects the power of your test. High population variance reduces power.

In other words, using a population that takes on a large range of values for a variable will lower the sensitivity of your test, while using a population where the variable is relatively narrowly distributed will increase the sensitivity of the test.

Using a fairly specific population with defined demographic characteristics can lower the spread of the variable of interest and improve power.
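
The link between spread and power comes from the standardized effect size, which divides the raw difference by the standard deviation. The sketch below uses made-up numbers (a 2-point raw difference on a stress scale, 50 participants per group) and a normal approximation; `power_for_raw_difference` is a hypothetical helper.

```python
from statistics import NormalDist

def power_for_raw_difference(raw_difference, std_dev, n_per_group, alpha=0.05):
    """Approximate two-tailed power for a raw mean difference and a population SD."""
    std_normal = NormalDist()
    effect_size = raw_difference / std_dev  # same raw difference, smaller d when SD is larger
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size * (n_per_group / 2) ** 0.5
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)

if __name__ == "__main__":
    for sd in (4.0, 6.0, 8.0):  # illustrative population standard deviations
        power = power_for_raw_difference(2.0, sd, n_per_group=50)
        print(f"SD = {sd:.0f}: power = {power:.2f}")
```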

Measurement error

Measurement error is the difference between the true value and the observed or recorded value of something. Measurements can only be as precise as the instruments and researchers that measure them, so some error is almost always present.

The higher the measurement error in a study, the lower the statistical power of a test. Measurement error can be random or systematic:

- Random errors are unpredictable and unevenly alter measurements due to chance factors (e.g., mood changes can influence survey responses, or having a bad day may lead to researchers misrecording observations).

- Systematic errors affect data in predictable ways from one measurement to the next (e.g., an incorrectly calibrated device will consistently record inaccurate data, or problematic survey questions may lead to biased responses).
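
For random error, the cost in power can be approximated with a reliability coefficient. The sketch below is an assumption-laden illustration, not something the article specifies: it treats reliability as the share of observed variance that is true-score variance, so the observed standardized effect shrinks to the true effect times the square root of reliability. It does not model systematic error, which biases results rather than adding noise. The function name `n_per_group_with_reliability` is hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist

def n_per_group_with_reliability(true_effect, reliability, alpha=0.05, power=0.80):
    """Approximate n per group when the outcome is measured with random error."""
    std_normal = NormalDist()
    z_sum = std_normal.inv_cdf(1 - alpha / 2) + std_normal.inv_cdf(power)
    observed_effect = true_effect * sqrt(reliability)  # attenuated by random measurement noise
    return ceil(2 * (z_sum / observed_effect) ** 2)

if __name__ == "__main__":
    for reliability in (1.0, 0.8, 0.6):  # illustrative reliability values
        n = n_per_group_with_reliability(0.5, reliability)
        print(f"reliability {reliability:.1f}: about {n} participants per group")
```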

How do you increase power?

Since many research aspects directly or indirectly influence power, there are various ways to improve power. While some of these can usually be implemented, others are costly or involve a tradeoff with other important considerations.

Increase the effect size. To increase the expected effect in an experiment, you could manipulate your independent variable more widely (e.g., spending 1 hour instead of 10 minutes in nature) to increase the effect on the dependent variable (stress level). This may not always be possible because there are limits to how much the outcomes in an experiment may vary.

Increase sample size. Based on sample size calculations, you may have room to increase your sample size while still meaningfully improving power. But there is a point at which increasing your sample size may not yield high enough benefits.

Increase the significance level. While this makes a test more sensitive to detecting true effects, it also increases the risk of making a Type I error.

Reduce measurement error. Increasing the precision and accuracy of your measurement devices and procedures reduces variability, improving reliability and power. Using multiple measures or methods, known as triangulation, can also help reduce systematic bias.

Use a one-tailed test instead of a two-tailed test. When using a t test or z test, a one-tailed test has higher power. However, a one-tailed test should only be used when there's a strong reason to expect an effect in a specific direction (e.g., one mean score will be higher than the other), because it won't be able to detect an effect in the other direction. In contrast, a two-tailed test is able to detect an effect in either direction.
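
The one-tailed versus two-tailed trade-off can be sketched for a z test. The numbers are illustrative assumptions (standardized effect of 0.5 in either direction, 50 participants per group, normal approximation), and both function names are hypothetical.

```python
from statistics import NormalDist

def power_two_tailed(effect_size, n_per_group, alpha=0.05):
    """Approximate power when effects in either direction count as detections."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha / 2)
    shift = effect_size * (n_per_group / 2) ** 0.5
    return std_normal.cdf(shift - z_crit) + std_normal.cdf(-shift - z_crit)

def power_one_tailed(effect_size, n_per_group, alpha=0.05):
    """Approximate power when only a positive effect counts as a detection."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)  # all of alpha sits in the upper tail
    shift = effect_size * (n_per_group / 2) ** 0.5
    return std_normal.cdf(shift - z_crit)

if __name__ == "__main__":
    for d in (0.5, -0.5):
        print(f"effect {d:+.1f}: one-tailed power = {power_one_tailed(d, 50):.3f}, "
              f"two-tailed power = {power_two_tailed(d, 50):.3f}")
```

When the effect runs in the predicted direction the one-tailed test is more powerful, but when it runs the other way its power is essentially zero while the two-tailed test is unaffected.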

Frequently asked questions about statistical power

- What is statistical power?

In statistics, power refers to the likelihood of a hypothesis test detecting a true effect if there is one. A statistically powerful test is more likely to avoid a false negative (a Type II error).

If you don't ensure enough power in your study, you may not be able to detect a statistically significant result even when it has practical significance. Your study might not have the ability to answer your research question.

- What is statistical significance?

Statistical significance is a term used by researchers to state that it is unlikely their observations could have occurred under the null hypothesis of a statistical test. Significance is usually denoted by a p-value, or probability value.

Statistical significance is arbitrary: it depends on the threshold, or alpha value, chosen by the researcher. The most common threshold is p < 0.05, which means that the data is likely to occur less than 5% of the time under the null hypothesis.

When the p-value falls below the chosen alpha value, then we say the result of the test is statistically significant.

- What is a power analysis?

A power analysis is a calculation that helps you determine a minimum sample size for your study. It's made up of four main components. If you know or have estimates for any three of these, you can calculate the fourth component.

- Statistical power: the likelihood that a test will detect an effect of a certain size if there is one, usually set at 80% or higher.

- Sample size: the minimum number of observations needed to detect an effect of a certain size with a given power level.

- Significance level (alpha): the maximum risk of rejecting a true null hypothesis that you are willing to take, usually set at 5%.

- Expected effect size: a standardized way of expressing the magnitude of the expected result of your study, usually based on similar studies or a pilot study.

- How do you increase statistical power?

There are various ways to improve power:

- Increase the potential effect size by manipulating your independent variable more strongly,

- Increase sample size,

- Increase the significance level (alpha),

- Reduce measurement error by increasing the precision and accuracy of your measurement devices and procedures,

- Use a one-tailed test instead of a two-tailed test for t tests and z tests.

Source: https://www.scribbr.com/statistics/statistical-power/
